The reality gap: testing AI from compliance to enforcement

Companies and regulators often operate with distinct immediate objectives, e.g. profit maximisation versus consumer protection. Yet, as artificial intelligence (AI) increasingly permeates the economy, both sides face a shared challenge: navigating the opacity of complex algorithms.

For companies, the primary imperative is risk management. A newly deployed algorithm that inadvertently breaches competition law or introduces bias against consumers can quickly trigger regulatory scrutiny, legal claims, financial damages, and reputational harm. For regulators, the complexity of AI systems makes it harder to deliver effective oversight, protect consumers, and safeguard competition in the market. The emerging common ground for both stakeholders is the use of ‘testing sandboxes’: controlled, simulated environments where complex AI systems can be evaluated safely.

Historically viewed as ex ante tools—such as the AI Regulatory Sandboxes (AIRS) established under the EU’s AI Act, or the testing frameworks emphasised in the US AI Action Plan—sandbox environments are now rapidly becoming vital instruments of ex post enforcement.1, 2 Under regimes spanning conduct regulation, GDPR, equal pay, competition law, and the EU’s Digital Markets Act (DMA), authorities are increasingly requesting access to testing environments to audit algorithms and gather forensic evidence.

Yet, whether a sandbox is built voluntarily by a firm to stress-test internal compliance or mandated externally for an investigation, a fundamental question persists: to what extent can a simulated environment genuinely replicate the complexities of the live market?

The illusion of the perfect mirror

From an economic standpoint, a regulator’s expectation of high fidelity is often reasonable. Algorithmic testing is valuable only to the extent that behaviour observed in a controlled setting reliably predicts real-world outcomes.3 If a simplified sandbox systematically masks risks, relies on highly aggregated data, or fails to capture the edge cases of real consumer behaviour, it risks creating a false sense of security for the firm and an incomplete picture for the regulator.4

It is therefore understandable that authorities seek test environments that closely mirror true market conditions. However, from an engineering perspective, achieving a perfect replica presents a significant operational and computing challenge. For example, a modern digital platform is not a standalone software component that can be easily duplicated offline. It is a dynamic ecosystem where multiple ranking algorithms interact continuously with live advertising auctions, dynamic pricing models, and real-time user feedback loops.

Populating an AI sandbox with data introduces substantial friction. To comply with privacy frameworks such as the GDPR, firms must generate synthetic data that accurately reflects user populations without compromising individual privacy. Creating a high-fidelity sandbox is therefore a major expense, and demanding a perfect clone of a live digital economy is often disproportionate and technically infeasible.

A further consideration is time. Regulatory investigations are often subject to statutory or administrative deadlines, introduced in part to prevent proceedings from extending indefinitely. Within these bounded time horizons, the pursuit of a perfect sandbox replica may be doubly impractical: not only does the engineering challenge remain formidable, but the time available to resolve it is itself constrained.

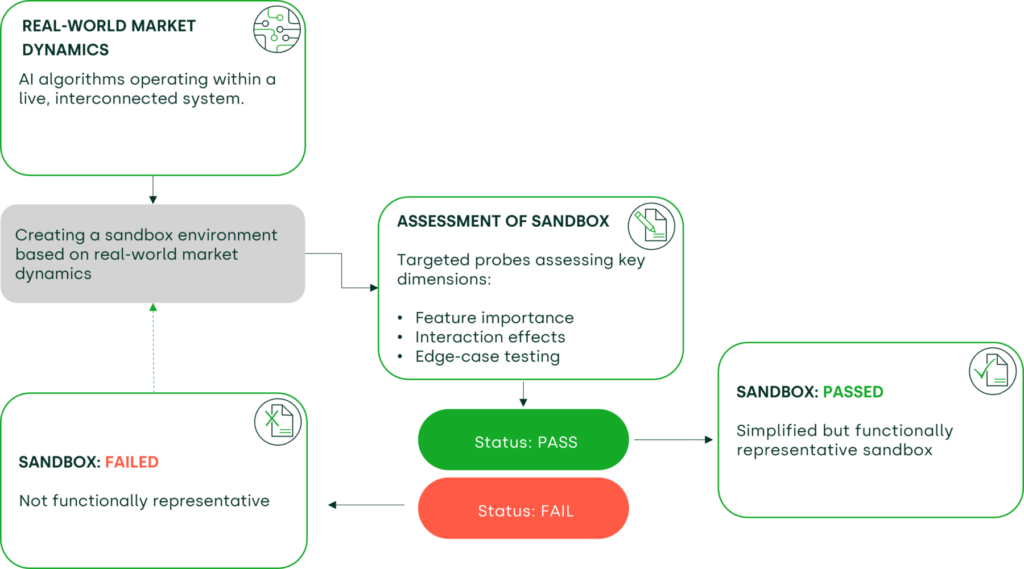

Figure 1 Diagnostic probes assess whether a simplified sandbox captures the key dimensions of real-world market dynamics

The crucible of a regulatory investigation

This tension becomes particularly acute during active enforcement. Consider a regulatory investigation under the DMA assessing whether a ‘gatekeeper’ marketplace exhibits self-preferencing—systematically favouring its own branded products over third-party offers without objective economic justification.

To assess this rigorously, the algorithm must be observed processing search queries and purchase histories that reflect the full diversity of consumer usage. If the firm, constrained by privacy rules and resource costs, provides a sandbox reliant on heavily aggregated data, there is a risk of underestimating the extent of any preferential treatment. Yet, if building a flawless mirror is practically impossible, how can regulators gain confidence in the simulated results?

Econometrics as a practical truce

Regulators and companies face a dilemma: demanding architectural perfection may stall investigations through prolonged engineering delays, while accepting an overly simplified environment risks yielding unreliable evidence.

The solution may lie less in software engineering and more in applied statistics. Rather than insisting on a flawless replica, both parties can seek to quantify the distortion mathematically. One practical approach is ‘feature importance analysis’, an econometric technique that identifies which factors most strongly predict outcomes in both the sandbox and the live environment.
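As a minimal sketch of what such an analysis might compute, the Python below estimates a permutation-style importance for each ranking factor: one factor is shuffled across listings, and the average change in the ranking score is recorded and normalised so that the importances sum to one. Everything here is an illustrative assumption rather than a description of any real platform: the two scoring functions (`live_score`, `sandbox_score`), their weights, the feature names, and the randomly generated listings are all hypothetical.

```python
import random
from statistics import mean

FEATURES = ["price", "seller_reputation", "platform_owned"]

def live_score(item):
    # Hypothetical stand-in for the live ranking algorithm (weights are illustrative).
    return (-0.2 * item["price"] + 0.3 * item["seller_reputation"]
            + 0.5 * item["platform_owned"])

def sandbox_score(item):
    # Hypothetical stand-in for the sandbox, where ownership barely moves the score.
    return (-0.2 * item["price"] + 0.3 * item["seller_reputation"]
            + 0.05 * item["platform_owned"])

def make_listings(n, rng):
    # Synthetic listings: price and reputation in [0, 1], about 30% platform-owned.
    return [{"price": rng.random(),
             "seller_reputation": rng.random(),
             "platform_owned": float(rng.random() < 0.3)} for _ in range(n)]

def permutation_importance(score_fn, listings, rng, repeats=20):
    """Share of total score movement caused by shuffling each feature."""
    baseline = [score_fn(x) for x in listings]
    raw = {}
    for feature in FEATURES:
        shifts = []
        for _ in range(repeats):
            shuffled = [x[feature] for x in listings]
            rng.shuffle(shuffled)
            perturbed = [score_fn({**x, feature: v})
                         for x, v in zip(listings, shuffled)]
            shifts.append(mean(abs(b - p) for b, p in zip(baseline, perturbed)))
        raw[feature] = mean(shifts)
    total = sum(raw.values())
    return {f: raw[f] / total for f in FEATURES}

rng = random.Random(0)  # fixed seed so the sketch is reproducible
listings = make_listings(500, rng)
live_importance = permutation_importance(live_score, listings, rng)
sandbox_importance = permutation_importance(sandbox_score, listings, rng)
for f in FEATURES:
    print(f"{f:18s} live={live_importance[f]:.2f} sandbox={sandbox_importance[f]:.2f}")
```

The same listings are fed to both scoring functions so that any difference in importance reflects the environments rather than the data. In a real exercise the two environments would typically also differ in their input data, which is a further source of divergence to test.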

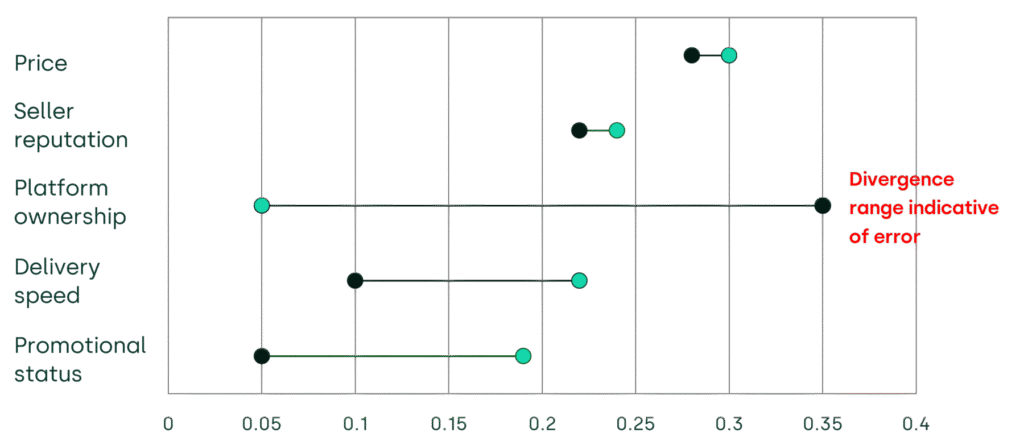

By analysing how variables such as price, seller reputation, and platform ownership influence rankings, economic experts can assess whether the sandbox accurately captures the system’s core commercial mechanics. If platform ownership is the dominant predictor of high rankings in the live market (e.g. yielding a high importance score of 0.35) but is negligible in the sandbox (scoring just 0.05), this divergence indicates that the test environment may be artificially constrained (see Figure 2).
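The comparison described above can be sketched as a simple gap check between two sets of importance scores. Only the platform ownership figures (0.35 live, 0.05 sandbox) come from the stylised example in the text. The other scores, the `delivery_speed` feature, the function name `importance_gaps`, and the 0.15 tolerance are hypothetical choices made for illustration.

```python
def importance_gaps(live, sandbox, tolerance=0.15):
    """Return features whose live-minus-sandbox importance gap exceeds
    `tolerance` in absolute value, ordered from largest gap to smallest."""
    gaps = {f: round(live[f] - sandbox.get(f, 0.0), 4) for f in live}
    flagged = {f: g for f, g in gaps.items() if abs(g) > tolerance}
    return dict(sorted(flagged.items(), key=lambda kv: abs(kv[1]), reverse=True))

# Platform ownership scores are taken from the text; the rest are hypothetical.
live = {"platform_ownership": 0.35, "price": 0.30,
        "seller_reputation": 0.25, "delivery_speed": 0.10}
sandbox = {"platform_ownership": 0.05, "price": 0.38,
           "seller_reputation": 0.33, "delivery_speed": 0.24}

flagged = importance_gaps(live, sandbox)
print(flagged)
```

With these inputs only platform ownership is flagged, with a gap of 0.30. What counts as a material gap is a judgement for the parties to agree in advance. The tolerance here is a placeholder, not a recommended threshold.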

Conversely, if the primary predictors align across both environments, even within a scaled-down sandbox, both compliance officers and external authorities can place greater confidence in the findings. The same approach lets investigators stress-test specific contexts, for example by running separate analyses for fiercely competitive product categories to map precisely where the sandbox diverges from reality. Crucially, it also allows experts to test for interaction effects, such as whether platform ownership combined with promotional campaigns yields vastly different outcomes in the sandbox compared to the live environment, flagging critical gaps in system integration.
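One simple way to illustrate an interaction test is a difference-in-differences contrast: compare the ranking lift from a promotion for platform-owned listings with the lift for third-party listings, separately in each environment. The sketch below assumes a hypothetical data layout (a `score`, an `owned` flag and a `promoted` flag per listing) and uses made-up scores. It is not drawn from any real investigation, and a full analysis would normally use a regression with an interaction term and standard errors.

```python
from statistics import mean

def rows_from(cells):
    # Expand {(owned, promoted): [scores]} into one record per listing.
    return [{"owned": owned, "promoted": promoted, "score": s}
            for (owned, promoted), scores in cells.items() for s in scores]

def interaction_effect(rows):
    """Extra promotion lift enjoyed by platform-owned listings
    relative to third-party listings (difference-in-differences)."""
    def cell(owned, promoted):
        return mean(r["score"] for r in rows
                    if r["owned"] == owned and r["promoted"] == promoted)
    lift_owned = cell(True, True) - cell(True, False)
    lift_third_party = cell(False, True) - cell(False, False)
    return lift_owned - lift_third_party

# Hypothetical ranking scores per cell (higher means ranked more prominently).
live_rows = rows_from({
    (True, True): [0.9, 0.8], (True, False): [0.5, 0.6],
    (False, True): [0.5, 0.4], (False, False): [0.3, 0.4],
})
sandbox_rows = rows_from({
    (True, True): [0.6, 0.7], (True, False): [0.5, 0.6],
    (False, True): [0.5, 0.4], (False, False): [0.3, 0.4],
})

live_interaction = interaction_effect(live_rows)
sandbox_interaction = interaction_effect(sandbox_rows)
print(f"live: {live_interaction:.2f}, sandbox: {sandbox_interaction:.2f}")
```

In this made-up data, promotions lift platform-owned listings by 0.20 more than third-party listings in the live environment, while the sandbox shows no such extra lift. A gap of this kind would suggest that the sandbox does not reproduce how the promotion and ranking systems interact.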

Figure 2 Stylised example of divergence between live and sandbox environments on a key variable (platform ownership)

A framework for proportionality

Ultimately, a regulatory sandbox is both a technical tool and an economic compromise. It represents a space between a regulator’s need for transparency and a firm’s operational realities.

For technology companies, employing econometric techniques such as feature importance analysis offers a rigorous method to validate internal compliance tests, satisfying regulatory requirements without building prohibitively expensive platform replicas. For regulators and courts, it provides an empirically grounded framework for determining the evidential weight of simulated testing in high-stakes adjudications.

As algorithmic testing becomes a central pillar of digital governance, the dialogue between stakeholders must evolve. Acknowledging the economic friction involved in sandbox creation, while maintaining rigorous testing standards, is essential. By shifting the focus from building perfect replicas to systematically measuring their accuracy, both regulators and firms can ensure that these tools deliver meaningful accountability, grounded in economic reality.

Footnotes

1 Due, S.A., Shah, H., Moraes, T., Genicot, N. and Canter, M. (2024), ‘Sandboxing Artificial Intelligence: Balancing Innovation, Regulation, and Stakeholder Needs’, FARI – AI for the Common Good Institute Brussels, Tech. Rep. White Paper based on workshops by CAIRNE and FARI. See also EU Artificial Intelligence Act, Article 57, https://artificialintelligenceact.eu/article/57/

2 US White House Office of Science and Technology Policy (July 2025), ‘Winning the race: America’s AI Action Plan’, The White House, Tech. Rep. See https://www.whitehouse.gov/wp-content/uploads/2025/07/Americas-AI-Action-Plan.pdf.

3 Appaya, M.S., Gradstein, H.L., and Haji Kanz, M. (2020), ‘Global experiences from regulatory sandboxes’, World Bank. See https://documents.worldbank.org/en/publication/documents-reports/documentdetail/912001605241080935/Global-Experiences-from-Regulatory-Sandboxes.

4 Organisation for Economic Co-operation and Development (2025), ‘Regulatory sandbox toolkit’, Section on sandbox design challenges and quality assurance.
