Behavioural biases in the judiciary: food for thought?

Behavioural economics has taught us that human decision-making is not perfectly ‘rational’; so to what extent can we expect judges to be free from bias? We explore some recent literature on the topic and discuss potential implications. Although—as human beings—we can never be perfectly free from behavioural bias, our judicial processes can adopt measures to bolster fairness and accuracy in the decision-making process.

One visible effect of the current COVID-19 pandemic on our lives has been the requirement to wear masks in various public situations. As many of us have experienced, masks can make it very hard to accurately read a person’s facial expression, or even to recognise friends. One setting in which this may matter more than most is where a judge must consider the credibility of a witness. In some cases, this involves reading their ‘demeanour’—i.e. how truthful and reliable the witness appears to be. With many defendants, witnesses and experts now potentially appearing in a mask or in a video call, some have been calling for the legal profession to reconsider the use of demeanour in judging someone’s credibility.1 Some think that it introduces unconscious bias into judicial decision-making and does not necessarily help us get to the truth.

This matter relates to a much broader question: to what extent are judges susceptible to biases? Are their decisions always entirely evidence-based, rational and unbiased? And what could economics possibly have to say on the matter?

The framework: behavioural economics

Over the last 20 years, behavioural economics has been pushing further into the mainstream of economic thinking. Policymakers, researchers, regulators and businesses have all begun to take on board the usefulness of the framework and tools that behavioural economics offers.

One way of characterising this strand of economics is to say that it seeks to understand the factors that influence preferences, decision-making and choices. Under this framework, our brains process information in two ways. System 1 processing involves instinctive intuition, or the unconscious application of pre-learned skills, rather than conscious ‘thinking’. System 2 is a slower, more deliberative, evidence-based way of processing information.2

System 2 consumes more mental resources than System 1, which is why relying on System 1 can be effective and efficient in many contexts. For example, learning to ride a bike at first requires conscious effort (System 2), but once the skill has been learned, the rider can rely much more on System 1 to propel them forward, leaving System 2 free to tackle new challenges. Moreover, in certain situations, simple ‘rules of thumb’ (heuristics) or even ‘gut instinct’ can deliver the right answer.

However, our learning, instincts and rules of thumb are all susceptible to behavioural biases—which in turn can lead us into systematically suboptimal decisions. The easiest solution to adopt mentally may not be the best; indeed, in some instances, our brains naturally use System 1 when the better option is to employ System 2.

One context in which we might want a homo economicus (a totally rational, unbiased agent) is in judicial decision-making. These particular decisions often involve high stakes, and a liberal democratic society tends to demand an impartial judiciary. Indeed, judges take oaths swearing to make decisions carefully and free from any deliberate bias. For example, judges in the EU:3

shall […] take an oath to perform his duties impartially and conscientiously. [emphasis added]

However, given the amount of evidence on behavioural biases in human decision-making, might we be setting the bar too high—even for judges?

Extraneous factors in judicial decision-making?

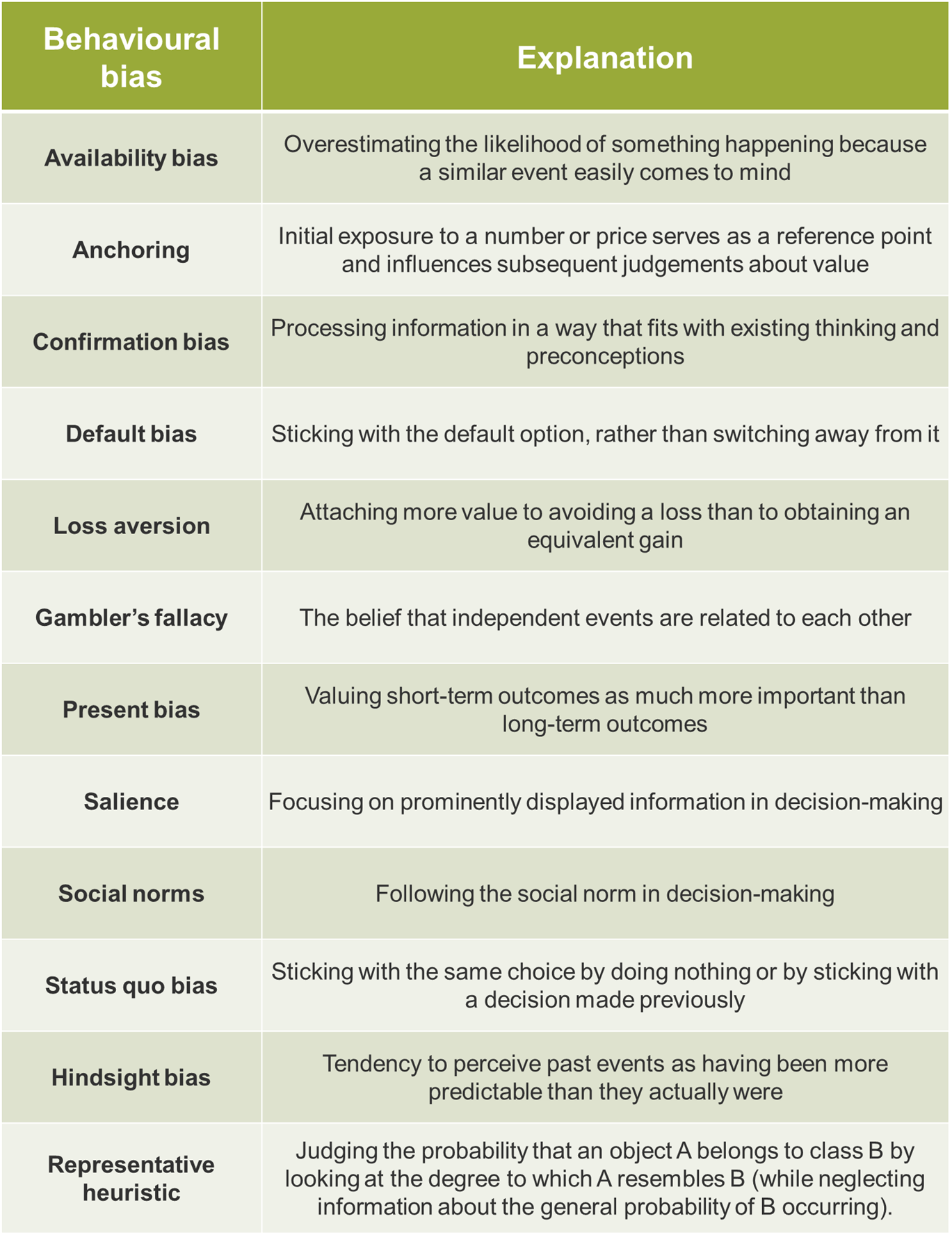

It is widely accepted that humans are cognitively limited, and thus prone to many behavioural biases. These biases have been well documented in a now vast literature, and some of the best-known are summarised in Table 1.

Table 1 Selected behavioural biases

In terms of behavioural bias in judicial decision-making in particular, we can identify two ‘flavours’ of academic research. The first is ‘lab-based’, examining the extent to which judges display behavioural biases in a controlled, hypothetical environment. The second analyses large datasets of real-life judicial decisions for evidence of systematic bias. The unifying research question is: to what extent can extraneous factors affect the legal decisions that judges make?

‘Lab-based’ experiments versus the real world

A series of studies have attempted to test whether judges are susceptible to particular known behavioural biases. These tend to use a sample of 50–100 judges, with questions framed in particular ways in order to test for the effects of bias.

For example, some studies present legal experts with ‘realistic’ (but hypothetical) case materials and ask them to determine a sentence for the defendant. Different groups are given various anchors that are irrelevant to the merits of the case in question (ranging from a piece of journalism to a throw of a set of dice). The results show that these irrelevant anchors influenced the sentences chosen by the legal professionals. Various other ‘lab’ experiments purport to show that judges are susceptible to hindsight bias and the representativeness heuristic, among other biases.

From these findings, authors tend to conclude that judges are susceptible to the same kinds of behavioural biases as the rest of us. However, there are several caveats to bear in mind before concluding that these biases find their way into the courtroom. These include the following.

- Sample size: such experiments tend to be based on relatively small samples of judges (typically between 50 and 100).

- Hypothetical scenarios: researchers can never conduct these experiments in the real-life context of judicial decision-making. There is a very real possibility that judges take more care to avoid such biases when deciding in an actual legal setting.

- Non-incentivised: relatedly, these experiments typically do not reward participants for getting the correct answer. Within a professional context, however, the stakes may be high enough that judges rely more on deliberation than on intuition.

More recently, several studies have attempted to investigate the effect of extraneous factors on judicial decision-making in the real world. These researchers make use of large datasets of actual judicial decisions (such as asylum rulings, decisions by immigration judges and juvenile court cases). The approach is to estimate a statistical model of what influences the decision outcome. By controlling for many relevant factors, researchers can test whether an irrelevant factor is statistically significant in the model. If it is, this indicates that more than the simple, brute facts of the case factored into the decision.
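As a rough illustration of this approach (and not the specification used in any of the studies cited), the sketch below simulates hypothetical decisions, fits a linear probability model by ordinary least squares, and checks whether an extraneous factor is statistically significant once a relevant factor is controlled for. The variable names (`severity`, `late`), the effect sizes and the simple model are all assumptions made for the example; the published studies use richer specifications, for example with judge-specific fixed effects.

```python
import math
import random


def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented copy
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def ols(X, y):
    """Return (coefficients, standard errors) for the model y = X b + e.

    Uses classical (homoskedastic) standard errors, a simplification
    for a binary outcome.
    """
    n, k = len(X), len(X[0])
    xtx = [[sum(r[i] * r[j] for r in X) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(X, y)) for i in range(k)]
    beta = solve(xtx, xty)
    resid = [yi - sum(b * xi for b, xi in zip(beta, r)) for r, yi in zip(X, y)]
    sigma2 = sum(e * e for e in resid) / (n - k)
    se = []
    for i in range(k):
        unit = [1.0 if j == i else 0.0 for j in range(k)]
        se.append(math.sqrt(sigma2 * solve(xtx, unit)[i]))  # i-th diagonal of inverse
    return beta, se


def simulate(n, extraneous_effect, seed=0):
    """Simulate n hypothetical rulings (1 = favourable, 0 = unfavourable)."""
    rng = random.Random(seed)
    X, y = [], []
    for _ in range(n):
        severity = rng.random()   # relevant factor (hypothetical)
        late = rng.randint(0, 1)  # extraneous factor: heard late in a session
        p = 0.7 - 0.4 * severity + extraneous_effect * late
        X.append([1.0, severity, float(late)])
        y.append(1.0 if rng.random() < p else 0.0)
    return X, y


X, y = simulate(5000, extraneous_effect=-0.15, seed=1)
beta, se = ols(X, y)
t_late = beta[2] / se[2]
print(f"coefficient on 'late': {beta[2]:.3f}, t-statistic: {t_late:.1f}")
print("significant at the 5% level" if abs(t_late) > 1.96 else "not significant")
```

A large t-statistic on `late` only shows an association that survives the included controls. As the critiques of the parole study illustrate, an omitted factor correlated with both case order and outcome could produce the same pattern.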

Irrational, hungry judges?

One of the first (and most well-known) examples of a real-world study is a 2011 paper entitled ‘Extraneous factors in judicial decisions’.4 The authors collected data on over 1,000 rulings on parole requests in Israel. In these cases, judges would typically process around 20 parole requests in a day, taking two food breaks at a time of their choosing. After controlling for various demographic and legal attributes, as well as judge-specific ‘fixed effects’, the authors found that the later in a session a case was heard, the more likely it was to be rejected. This is taken as evidence of ‘mental depletion’, since rejecting a request preserves the status quo and therefore takes less cognitive effort. This would seem to imply that what should be an irrelevant factor in determining the outcome of a judicial decision may in fact influence the judge’s choice.

The conclusions from this study only hold if the order of cases is exogenous to the timing of meal breaks—that is, if no other factor exists that increases both the chance of a case being heard later in a session and the chance of a negative decision. For example, one challenge to the study has been that the parole board tries to cover all cases from one prison in one session, and that prisoners unrepresented by an attorney usually go last and are less likely to be granted parole.5 In response to this critique, Danziger et al. (2011) reran their analysis, incorporating the comments, but were still able to replicate the original results.

In another critique,6 it has been argued that the effect could be produced by rational judges using only relevant information—judges have an approximate time limit for each session, and will avoid starting a new case if they expect that it will overrun. Since favourable rulings take longer than unfavourable ones, it is more likely that the last case in a session will be unfavourable (with the next session beginning with a favourable case).
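The mechanism behind this critique can be sketched with a small simulation. It is an illustrative toy model, not a reproduction of the published simulations: the session length, the case durations and the share of favourable rulings are hypothetical values chosen for the example. Each case's outcome is drawn independently of when it is heard, and the judge takes a break whenever the next case would overrun the session.

```python
import random
from collections import defaultdict


def simulate_sessions(n_cases, session_minutes=30, p_favourable=0.35,
                      minutes_favourable=7, minutes_unfavourable=5, seed=0):
    """Return the share of favourable rulings by position within a session.

    All parameter values are hypothetical. Outcomes do not depend on
    position, fatigue or hunger: the only 'rule' is that a case is not
    started if it would overrun the session.
    """
    rng = random.Random(seed)
    heard = defaultdict(int)
    granted = defaultdict(int)
    remaining = session_minutes
    position = 0
    for _ in range(n_cases):
        favourable = rng.random() < p_favourable
        minutes = minutes_favourable if favourable else minutes_unfavourable
        if minutes > remaining:
            # The case would overrun, so the judge takes a break and
            # this case opens the next session instead.
            remaining = session_minutes
            position = 0
        position += 1
        remaining -= minutes
        heard[position] += 1
        granted[position] += favourable
    return {pos: granted[pos] / heard[pos] for pos in sorted(heard)}


rates = simulate_sessions(200_000, seed=1)
for pos, rate in rates.items():
    print(f"position {pos} in session: {rate:.1%} favourable")
```

In this toy model the share of favourable rulings is highest for the first case after a break and falls towards the end of a session, even though the simulated judge never tires. A pattern of this kind therefore cannot, on its own, distinguish mental depletion from time management.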

However, when these types of critique are taken into account, they do not overturn the conclusion that ordering, mental depletion and extraneous factors influence judicial decisions; they merely suggest that the effect is likely to be less strong than was initially suggested.

Happy birthdays?

Another example comes from a 2020 paper entitled ‘Clash of Norms: Judicial Leniency on Defendant Birthdays’.7 In the paper, the authors test whether judges are more lenient when hearing a case on the defendant’s birthday. As the authors point out, while judicial decisions are characterised by strong professional norms that aim to cultivate integrity and impede any influence from factors irrelevant to the case, birthdays are strongly associated with social norms.

Using millions of observations over several years in France and the USA, the authors investigated whether professional norms can suppress social norms. Controlling for various factors, they ran a statistical model to establish whether sentencing decisions were affected by a defendant’s birthday. The results showed that on defendants’ birthdays, judges tend to assign 1% fewer sentences and decrease the length of sentence by 3%. However, they found that the effect fades for more severe crimes with longer sentences.

The authors postulate that their results are consistent with reference-dependent social preferences—where a person’s preferences depend on a comparison to a ‘reference point’ (for example, the status quo or some social comparison). In this case, the findings may be partially explained by guilt aversion theory. Guilt-averse decision-makers dislike ‘letting others down’. Here, a judge may believe that the defendant is expecting greater leniency on their birthday, and is therefore more likely to show some leniency.

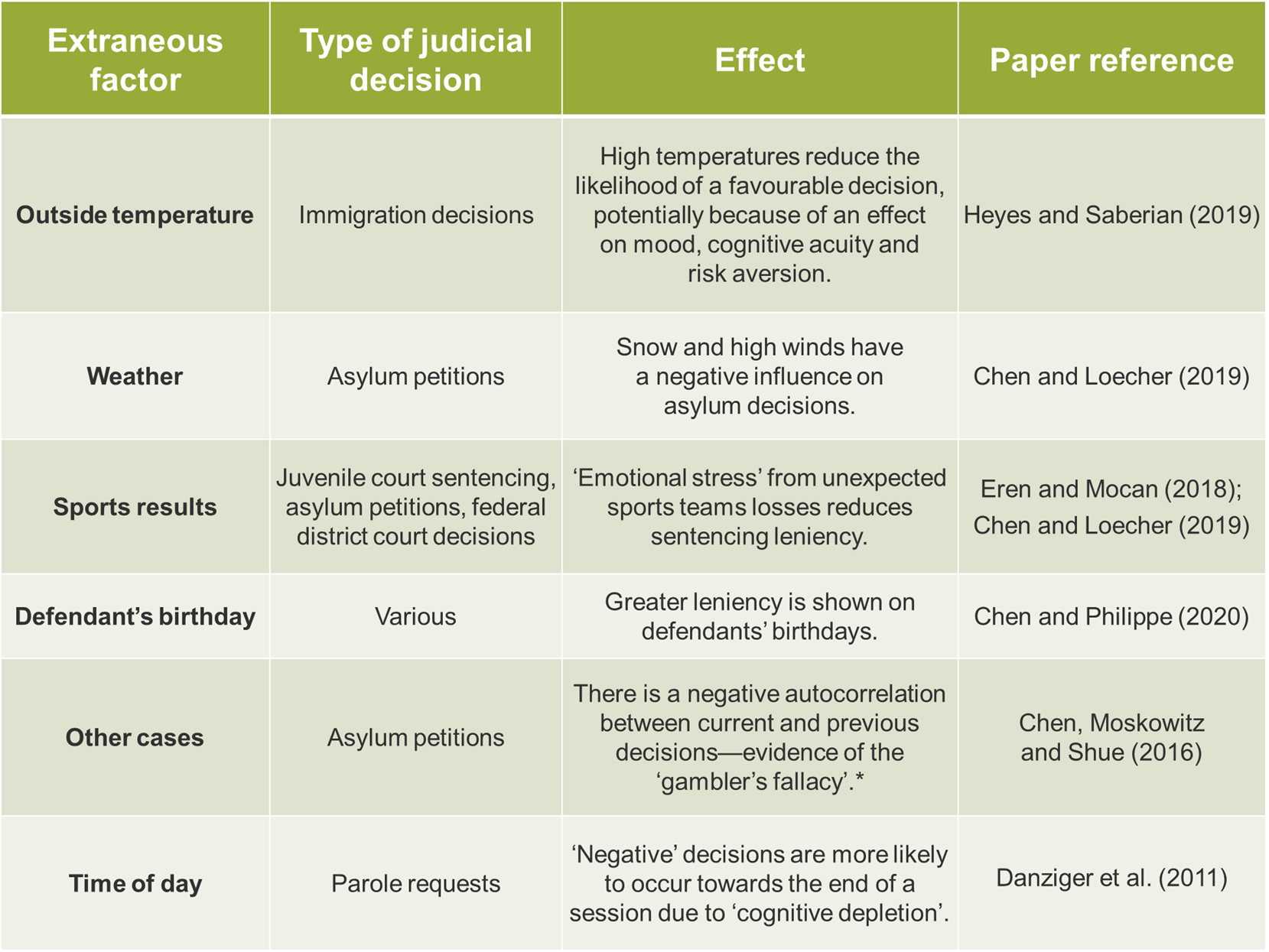

Using this method of finding factors that are statistically significant in models of judicial decisions based on large datasets, studies have reported that extraneous factors do influence judicial decisions in various contexts. Some examples are summarised in Table 2.

Table 2 Extraneous factors in judicial decisions

Case not so closed…

These studies have begun to show that even judges are susceptible to various biases, and that extraneous factors may influence judicial decisions in different situations. While the size of these effects may be fairly small, the justice system is a context in which society particularly values fair, impartial and rational decision-making.

As noted above, there are certainly caveats to the findings in the literature. Indeed, there are various reasons to believe that judges are relatively well equipped to deal with behavioural biases:

- judges tend to be very experienced and highly motivated;

- judges go through a long process of education and ongoing training, parts of which may help them to recognise and address potential bias;

- decisions must be based on the evidence, and appeal processes can help to ensure that the final outcome is made with appropriate deliberation.

Nevertheless, judges are still human beings, and as such they can never be totally immune to behavioural biases. There are a number of ways of mitigating the effect of behavioural biases in judicial decision-making, several of which are already in place (to some extent):

- allowing judges more time to deliberate;

- giving judges less discretion;

- requiring judges to write opinions more often, nudging a greater use of deliberation over intuition;

- introducing further checks on decisions (e.g. appeal processes);

- making greater use of artificial intelligence (e.g. as an additional check that can flag when biases are particularly likely to be prevalent);8

- including behavioural science as part of judges’ education and ongoing training.

The precise effects of biases and possible interventions will be very much context-specific, and there will never be a silver bullet that will be able to ‘de-bias’ decision-making. However, in any context where humans make choices, it is worth asking ourselves whether any unconscious bias is hindering our ability to make the right decision. This is especially important when the stakes are high, as is the case in judicial decisions.

Indeed, we recognise that even academic behavioural scientists are prone to bias! For example, in the literature discussed above, results with a positive finding of bias in judicial decisions may be seen as more interesting and novel—and so are perhaps more likely to be published or mentioned in articles. Research that finds no evidence of bias might not receive the same level of interest.

Nevertheless, a greater understanding of behavioural economics can only be useful in helping our legal institutions to consider how to ensure fair, rational and accurate decisions continue to be made by our judges.

1 For example, see Simon-Kerr, J. A. (2020), ‘Unmasking demeanor’, George Washington Law Review, 88, pp. 158–74.

2 See, for example, Kahneman, D. (2011), Thinking, Fast and Slow, New York: Farrar, Straus and Giroux.

3 Article 2 of Protocol No. 3 on the Statute of the Court of Justice of the European Union.

4 Danziger, S., Levav, J. and Avnaim-Pesso, L. (2011), ‘Extraneous factors in judicial decisions’, Proceedings of the National Academy of Sciences of the United States of America, 108:17, pp. 6889–92.

5 See Weinshall-Margel, K. and Shapard, J. (2011), ‘Overlooked factors in the analysis of parole decisions’, Proceedings of the National Academy of Sciences of the United States of America, 108:42, p. E833.

6 Glöckner, A. (2016), ‘The irrational hungry judge effect revisited: Simulations reveal that the magnitude of the effect is overestimated’, Judgment and Decision Making, 11:6, pp. 601–10.

7 Chen, D. L. and Philippe, A. (2020), ‘Clash of Norms: Judicial Leniency on Defendant Birthdays’, TSE Working Paper, 18:934, pp. 1–27.

8 For example, machine learning techniques can be used to predict judicial decisions based on very large datasets of previous cases. The generated models can flag inconsistencies in judicial behaviour, and situations in which biases may hold greater sway. The accuracy of these models will depend on the case characteristics or judicial attributes. See Chen, D. L. (2019), ‘Machine Learning and the Rule of Law’, Law as Data, Santa Fe Institute Press (ed. M. Livermore and D. Rockmore), 16, pp. 1–12.
