How can consumers be persuaded to shop around?

In June 2016, Oxera and the Nuffield Centre for Experimental Social Sciences (CESS) published the results of an experiment testing the effectiveness of different ‘prompts’ in encouraging consumers to shop around. The study, commissioned by the UK Financial Conduct Authority (FCA), found that personalised messages were the most effective in stimulating product comparisons, while generic messaging appealing to social norms also had a significant impact.

This article is based on Oxera and the Nuffield Centre for Experimental Social Sciences (2016), ‘Increasing consumer engagement in the annuities market: can prompts raise shopping around?’, prepared for the Financial Conduct Authority, June.

Regulators and competition authorities in a range of sectors have found that a lack of shopping around and switching often means that consumers do not achieve the best available deal for them. In the UK, for example, this has been seen in markets such as general insurance, cash savings and energy supply.1

The reluctance to search and switch is driven not only by a fully rational assessment of the costs and benefits of doing so, but also by consumers’ behavioural biases. Such biases include loss aversion,2 present bias,3 mistaken beliefs (about the benefits and costs of switching),4 and faulty ‘heuristics’5 (in the face of information overload). Prompts—i.e. framing information in a certain way to nudge people into changes of behaviour—are used in a number of sectors to mitigate these behavioural biases. As discussed in this article, the evidence shows that prompts can be effective at improving consumer outcomes.

For example, text alerts for personal current account customers about their overdrafts have been found to reduce overdraft usage, the average overdraft charges paid, and the average ‘buffer’ that consumers keep on their personal current accounts.6 Similarly, prompts to payday loan customers can reduce payday loan uptake, although there are large differences in the effectiveness of specific prompts.7 This example indicates that the design of a prompt, and its content, medium and formatting, are critical to its effectiveness in nudging consumers to consider whether they are making good choices.

However, intuition may not be the best guide to how effective a prompt will be in practice. Context is important, and it is often useful to test proposed remedies for such behavioural biases through experiments—whether in the laboratory, online or in the field.

The UK annuities market

The FCA was particularly concerned about a lack of shopping around in the annuities market. An annuity is a financial product that consumers may purchase using their pension pot when they retire. It provides the purchaser with the security of a fixed income stream over the remaining years of their life.

In the UK annuities market, the FCA found that competition was not working well for consumers.8 Of particular concern was consumers’ lack of awareness of their options at the point of retirement, and the fact that they tended not to shop around for annuities—with 60% purchasing an annuity from their current pension provider.9 Many consumers were found to forgo significant gains in retirement income by not shopping around for an annuity in the open market.

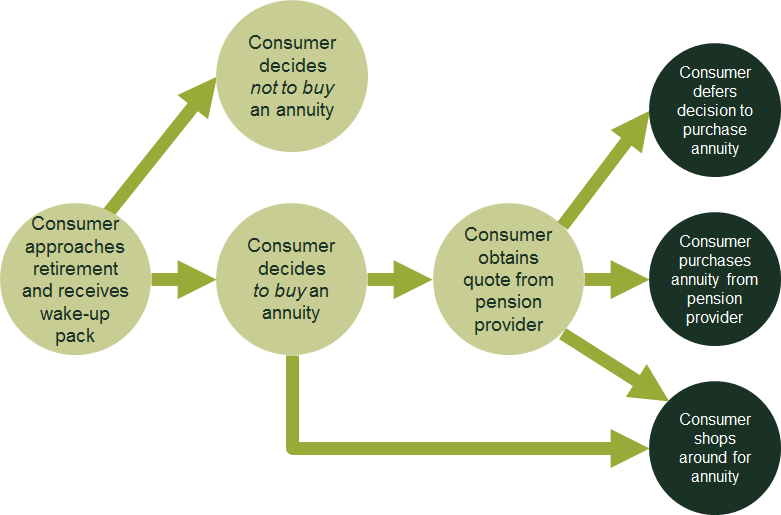

Figure 1 outlines the typical process that a consumer goes through when choosing an annuity. Around six months before retirement, the consumer receives a ‘wake-up’ pack from their pension provider setting out the options post retirement, with information about various retirement products (such as annuities, income drawdown, and taking a lump sum). If the consumer decides to purchase an annuity, they can request a quote from their pension provider or go directly to an alternative provider. If the consumer applies for a quote, the provider will send out a written communication setting this out.

Figure 1 Consumer journey at retirement

The FCA has considered various forms of information provision at this quotation stage to encourage consumers to shop around for their annuity. These range from a requirement for firms to make it clearer how their particular annuity quote compares with those of other providers operating in the open market, through to setting out more general information about how other consumers have benefited from shopping around.

The Oxera/CESS study

To assess the impact of such prompts on consumers’ propensity to shop around and switch, Oxera and the CESS conducted an experiment focusing on the point when consumers receive an annuity quote from their pension provider (the box below describes the different types of experiment that can be used). The experiment tested the impact of different prompts in the provider’s written communication in an online setting, among 1,996 people aged 55–65.10 It sought to mimic certain aspects of the consumer’s journey as they approach retirement. As is standard practice, participants were paid—both through a flat fee for their participation, and according to how they performed across the tasks.

Several innovative features were incorporated into the experimental environment in order to replicate, to some degree, the consumer inertia observed in the real world. The aim of the prompts was to counteract the inertia and encourage shopping around. This is discussed further below.

The online setting provided the opportunity to study how prompts can influence various types of behaviour, in addition to switching provider. For example, the study observed not only whether people shopped around, but also the time they spent doing so. In contrast, a field trial would only be able to show whether or not the consumer switched from their pension provider.

Randomised controlled trials

A randomised controlled trial (RCT) is a type of experiment that measures the impact of a policy by randomly allocating participants to two groups: those who are subject to the policy (the ‘treatment’ group), and those who are not (the ‘control’ group).

RCTs offer the most rigorous way to test the impact of a policy. Random assignment to treatment and control groups maximises the likelihood that the two groups will be as similar as possible on average, so that any differences in outcomes can be attributed to the policy intervention rather than to the characteristics of the participants in each group or to other external factors.

There are two broad types of RCT: field trials and lab trials. In a field trial, different interventions are tested in a real-life setting. This is arguably the ‘gold standard’ for an experiment, as it measures the impact of a policy on real decisions. However, costs and practical considerations may hamper the feasibility of conducting a field trial.

Lab trials aim to mimic a real-world situation in a stylised setting, either online or in a physical laboratory. They can test the impact of a policy when a field trial is not possible or practical. Furthermore, the stylised setting allows the experimenter to capture the key elements of a particular consumer decision, stripping the problem down to the basic mechanisms. For example, to explore how complex pricing affects decision-making, two hypothetical products with slight variations in their pricing features can be tested against each other to see how consumers respond.

Lab experiments also allow us to observe consumer decision-making behaviour. For example, in real life it is not always clear how consumers shop around, only whether they have done so. A lab experiment can help to reveal how much time consumers spend shopping around and how many comparison sites they visit. The Oxera/CESS experiment was piloted both in a physical laboratory setting and online, with the final experiment undertaken online.

Source: Oxera.
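The random allocation described in the box above can be sketched in a few lines of Python. This is only an illustration of the general technique, not the study's actual procedure: the function name, the arm labels, the seed and the near-equal group sizes are all assumptions, and only the total of 1,996 participants comes from the article.

```python
import random
from collections import Counter


def assign_groups(participant_ids, groups, seed=0):
    """Randomly allocate participants to groups of near-equal size.

    Hypothetical helper: the study's real allocation rule is not described
    in the article.
    """
    rng = random.Random(seed)      # fixed seed makes the allocation reproducible
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)          # random order removes any systematic pattern
    # Deal the shuffled participants out to the groups in turn (round-robin).
    return {pid: groups[i % len(groups)] for i, pid in enumerate(shuffled)}


# Illustrative arm labels (assumed), one control arm plus five prompt arms.
groups = [
    "control",
    "call_to_action",
    "personalised_annual",
    "personalised_lifetime",
    "non_personalised_annual",
    "non_personalised_lifetime",
]

allocation = assign_groups(range(1996), groups, seed=42)
sizes = Counter(allocation.values())
for group in groups:
    print(group, sizes[group])
```

Because each participant's arm depends only on the shuffle, characteristics such as age, income or education should be balanced across arms on average, which is what allows differences in outcomes to be read as the effect of the prompt.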

The experiment

Designing the prompts

The experiment tested the effectiveness of the following three main prompts that provided information on the potential gains from shopping around.

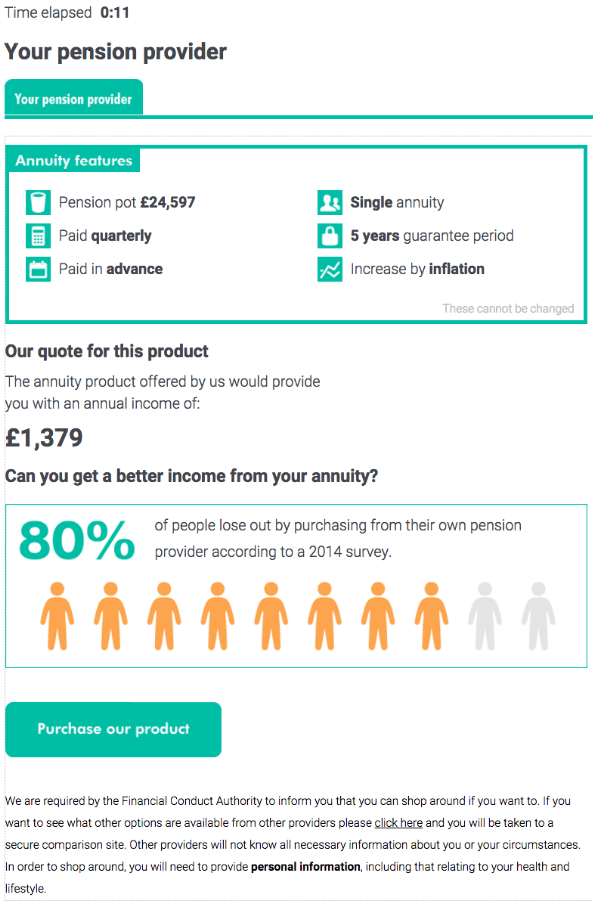

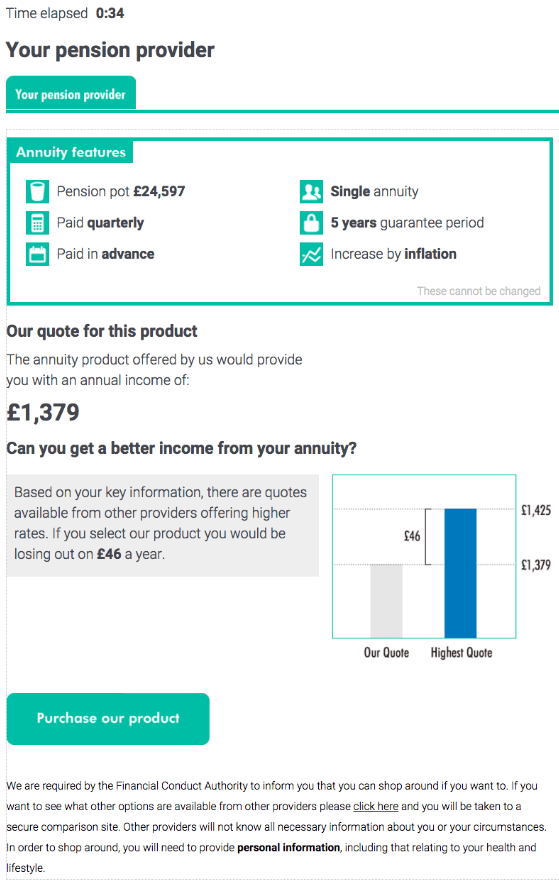

- Call to action—the participants were told that ‘80% of people who fail to switch from their pension provider lose out by not doing so.’ This text was accompanied by a visual representation of the 80% figure.

- Personalised quote comparison—the participants were provided with the best quote they could obtain by shopping around. The difference between that quote and the pension provider’s quote was highlighted. The text information was complemented by a bar chart comparison of the two quotes.

- Non-personalised quote comparison—the participants were provided with an estimate of how much they could obtain by shopping around. However, the information emphasised that this was an estimate only, and that participants might obtain a higher or lower quote if they shopped around.

The first two prompts are shown in Figure 2.

Figure 2 Call to action and personalised prompts

In addition, the experiment tested a variation within the personalised and non-personalised quote comparison groups by including information on the equivalent lifetime gains that consumers can obtain by shopping around and switching.

All prompts emphasised that consumers were likely to lose out on prospective gains by not shopping around—thus appealing to a form of loss aversion. Each prompt then sought to reduce customer inertia. An important issue is the extent to which prompts can educate consumers about the benefits of shopping around as opposed to providing simple illustrations that require little deliberation.

For example, the call to action was more generic. It focused on simplicity, and contained a prominent visual image to encourage social comparison. The personalised comparison was more bespoke, with a visual comparison between the best external quote available and the internal provider’s offering. The non-personalised comparison contained the same financial information as the personalised quote, but with additional text explaining that this amount was an estimate that was not guaranteed. The non-personalised and personalised quotes were also varied to include lifetime gains, to test whether including this higher figure would induce more shopping around.

The effectiveness of these prompts was compared with a simple message provided to the control group, which stated that it was not too late to shop around for quotes from other providers. The statement was based on an actual letter from a pension provider sent to one of its clients.

The experiment also tested whether the absolute and relative sizes of the gains from shopping around had an effect on the decision to do so.

Instilling and counteracting inertia

A challenge with any lab experiment is that people’s behaviour in the artificial experimental environment may not completely match what they would do in the real world. At the same time, lab experiments simplify the given setting in order to isolate and explore a particular issue. We also introduced innovations to the experimental environment, to place participants in the appropriate mindset, and to replicate certain aspects of the real-world environment.

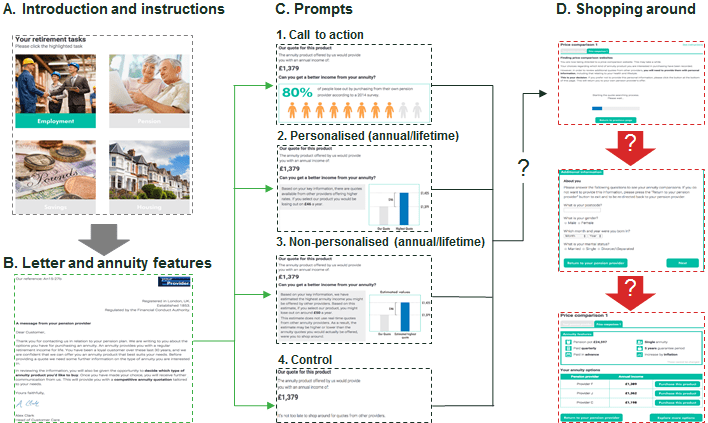

First, we did not want participants to think that they had to please the experimenter. They were placed in a scenario where they had to make a series of decisions on their retirement income sources. In addition to their pension annuity, they were asked to make decisions on: (i) part-time employment; (ii) private savings; and (iii) income from their home (see part A in Figure 3 below). This was done to shift attention away from the pension annuity and to reduce the risk of participants ‘playing the game’ and shopping around for annuities to comply with what they thought was expected of them.11

Another (related) challenge was to induce inertia in the artificial experimental setting, in particular before the information prompts were revealed. In an initial online pilot of 500 participants (and a subsequent laboratory test), we found that the majority of consumers shopped around. This did not match reality: people were behaving ‘too rationally’. Additional features were therefore included, before the information prompt was revealed, to increase participant engagement with the pensions task in a way that favoured the pension provider.

In the real world, a formal ‘inside quote’ by a pension provider for an annuity is provided to the consumer in writing. This is likely to provide an initial-contact advantage to the pension provider. The recipient would also need to switch to a computer to explore ‘outside quotes’ available on the open market, which might result in additional inertia due to the extra task involved. We needed a way of mimicking the resulting inertia in a purely online environment.

In the experiment the task began with a personalised and branded letter from the pension provider, which had some features that a provider might use in real life (see part B in Figure 3). The participant was asked to make choices about various annuity features (e.g. single vs joint life annuity) before receiving the provider quote. These measures were designed to induce a status quo bias—they raised the profile of the incumbent pension provider as the default, and ‘customising’ this default by choosing the annuity features required cognitive effort.

To focus the experiment, the prompt was applied at the point when the participant received the quote from their pension provider (part C in Figure 3). This was accompanied by a warning that the participant would need to provide personal and medical information in order to shop around.

The participants then had the choice of purchasing from their provider or shopping around for alternatives. If they chose the latter, they were directed to a questionnaire asking for personal/medical information, as on a real price comparison website (PCW) (part D in Figure 3). They were then directed to a PCW with three quotes. At that point, the participant could choose to purchase a product from one of the three providers; proceed to another PCW to review more quotes (which would require more personal/medical information); or return to their current pension provider. A total of five PCWs were available.

Figure 3 Main stages of the pension task

How did the consumers behave?

The key questions concerned whether a consumer clicked to shop around after they received the prompt, and the relative effectiveness of the different prompts. Propensity to shop around was also captured using additional metrics, including the degree of intensity with which consumers shopped around (e.g. the number of quotes they reviewed); whether they switched; and how much they gained (or lost) by switching.

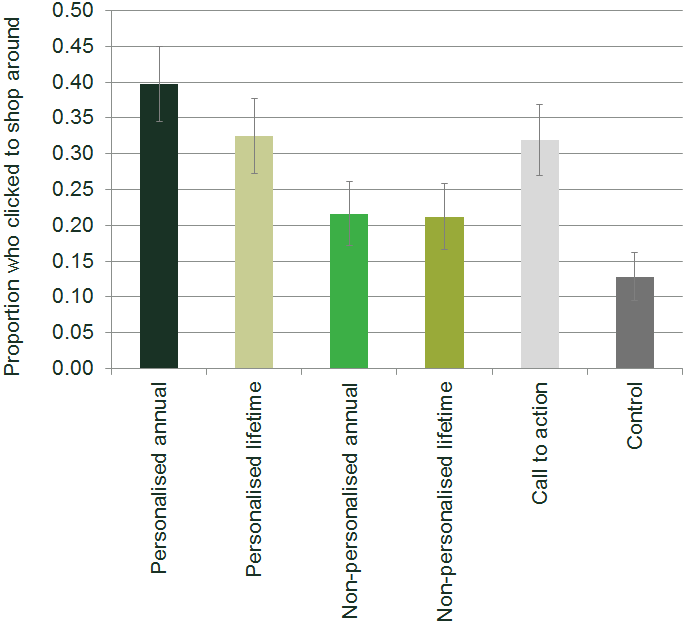

All of the treatment prompts had a significant impact on consumers’ decisions to shop around. Figure 4 shows the proportions of participants who clicked to shop around for the different groups. The impact of the prompts, measured as the difference between the treatment group and the control group, ranged from around 8 percentage points for the non-personalised lifetime prompt to 27 percentage points for the personalised annual prompt.

Figure 4 Proportion of consumers who clicked to shop around

Source: Oxera.
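The impacts reported above are differences in shopping-around rates between a treatment group and the control group, expressed in percentage points. A minimal sketch of that calculation follows, with a standard two-proportion z-test added as one common way to check statistical significance. The article does not say which test the study used, so the test is an assumption. The function name and the counts are also assumptions: the counts are invented purely for illustration and are not the study's results.

```python
import math


def treatment_effect(shopped_treat, n_treat, shopped_ctrl, n_ctrl):
    """Return (difference in percentage points, z statistic, two-sided p-value).

    Hypothetical helper using a pooled two-proportion z-test; assumes the
    pooled rate is strictly between 0 and 1.
    """
    p_treat = shopped_treat / n_treat
    p_ctrl = shopped_ctrl / n_ctrl
    diff_pp = (p_treat - p_ctrl) * 100  # impact in percentage points

    # Pooled rate under the null hypothesis that the prompt has no effect.
    pooled = (shopped_treat + shopped_ctrl) / (n_treat + n_ctrl)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_treat + 1 / n_ctrl))
    z = (p_treat - p_ctrl) / std_err

    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return diff_pp, z, p_value


# Made-up counts: 180 of 300 treated participants and 120 of 300 control
# participants click to shop around.
diff_pp, z, p_value = treatment_effect(180, 300, 120, 300)
print(f"impact: {diff_pp:.1f} percentage points, z = {z:.2f}, p = {p_value:.2g}")
```

Reporting the effect in percentage points rather than as a percentage change keeps it directly comparable across prompts, since every treatment group is measured against the same control group.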

The personalised annual prompt triggered the highest rate of shopping around, followed by the call to action (the difference between the two was 8 percentage points, and statistically significant). The two prompts influenced consumers in different ways: the personalised comparison offered information that was reliable and bespoke to the consumer; and the call to action offered simple, easily digestible information accompanied by a strong social-comparison visual.

The non-personalised prompt caused significantly less shopping around than the other two. This may be because the prompt contained too much text, leading to information overload and dilution of the message, and thus encouraging consumers to stick to the status quo. Alternatively, as it highlighted the uncertainty in the gains of shopping around, it may have discouraged consumers.

Including information in the text about the lifetime gains was not found to improve shopping around. The analysis also found no evidence of an impact of pension pot size per se on the participants’ propensity to shop around. There was, however, some evidence to suggest that the relative gains from shopping around (i.e. the gains relative to the pension provider quote) did have an impact. This suggests that consumers are more influenced by the relative (percentage) than the absolute (monetary) gains of shopping around. This is known as ‘reference dependence’, with the reference point here being the level of the pension provider’s offer.
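The distinction between absolute and relative gains can be made concrete with a small calculation. The sketch below uses hypothetical quotes, not figures from the study, and the function name is an assumption. It shows two consumers who would gain the same amount of money by switching but face very different percentage gains relative to their pension provider's quote.

```python
def shopping_gains(provider_quote, best_outside_quote):
    """Return (absolute gain in pounds, gain relative to the provider quote).

    Hypothetical helper: the provider quote acts as the reference point.
    """
    absolute = best_outside_quote - provider_quote
    relative = absolute / provider_quote
    return absolute, relative


# Hypothetical annual quotes in pounds: (provider quote, best outside quote).
examples = {
    "smaller pot": (1000, 1200),
    "larger pot": (4000, 4200),
}

for label, (inside, outside) in examples.items():
    absolute, relative = shopping_gains(inside, outside)
    print(f"{label}: gain of £{absolute} per year, or {relative:.0%} of the provider quote")
```

Under reference dependence, the consumer with the smaller pot would be expected to respond more strongly, even though both stand to gain the same monetary amount.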

These results were broadly consistent with those for the other outcomes measured in the experiment, such as whether the consumer persisted in their effort to shop around (measured by whether the participant viewed at least one PCW), and switching rates.

The demographic information provided by the participants revealed some other interesting findings. Participants were found to respond differently to each of the prompts based on their gender, income and education:

- women were more likely than men to shop around (by 4 percentage points), and were also more likely to respond to the personalised annual comparison (by 12 percentage points);

- high-income participants were more likely to shop around than low-income participants when assigned to the non-personalised lifetime group (by 10 percentage points);

- highly educated participants were more likely to shop around than less educated participants when assigned to either the control group or the non-personalised group (by 8–13 percentage points);

- participants who answered the following follow-up survey question correctly were more likely to have shopped around—‘You buy a bat and a ball for £1.10. The bat costs £1 more than the ball. How much does the ball cost?’

Answering this question correctly may be correlated with shopping-around behaviour, as both involve evaluating one’s options carefully rather than accepting the first answer that comes to mind.
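The arithmetic behind the bat-and-ball question is worth spelling out, since the answer that first comes to mind (10p) is wrong. Writing $b$ for the price of the ball in pounds (a symbol introduced here for illustration), the bat costs $b + 1.00$ and the two together cost £1.10:

```latex
\begin{aligned}
b + (b + 1.00) &= 1.10 \\
2b &= 0.10 \\
b &= 0.05
\end{aligned}
```

The ball therefore costs 5p and the bat £1.05. A ball costing 10p would imply a bat costing £1.10 and a total of £1.20.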

Concluding observations

A number of important lessons can be drawn from the Oxera/CESS experiment. The first is that information prompts can induce consumers to shop around. In our study, personalisation worked best, although a strong generic visual including a social comparison was also effective. The non-personalised prompt worked less well. This may be because complicating the message by introducing additional text can cause information overload or cast doubt on the likelihood of better offers being available.

Relative gains (the percentage gain, comparing the offer of the pension provider with those available on the open market) were found to be more important than absolute (monetary) gains.

The findings of this experiment are important beyond the annuities and financial services sector, as concerns about shopping around (or a lack of it) are shared by regulators and competition authorities across markets. The Oxera/CESS study brought significant innovations in inducing and counteracting consumer inertia, which will be of wider relevance to lab experiments elsewhere.

1 For example, see Financial Conduct Authority (2015), ‘Encouraging consumers to act at renewal: Evidence from field trials in the home and motor insurance markets’, Occasional Paper No. 12; Financial Conduct Authority (2015), ‘Cash savings market study report: Part I: Final findings Part II: Proposed remedies’, MS14/2.3; Competition and Markets Authority (2015), ‘Energy market investigation: Summary of provisional findings report’, July; Oxera (2016), ‘Please leave a message: has telecoms become too complex for consumers?’, Agenda, August; and Oxera (2016), ‘Energy market investigation: what next for the GB retail energy market?’, Agenda, June.

2 This refers to the fact that people would rather avoid losses than acquire gains. Loss aversion is partly due to the ‘endowment effect’, whereby people ascribe more value to their own possessions simply because they own them. This in turn suggests that people often demand much more to give up an object than they would be willing to pay to acquire it.

3 This is the tendency to place too much weight on immediate gains at the expense of one’s long-term goals. For example, consumers may agree that they should switch energy provider to save money in the long term, but many are unwilling to give up their time today to do so.

4 Consumers may mistakenly believe that there is little to be gained by switching, and/or that the process of searching and switching will be unduly complex and time-consuming.

5 Heuristics are mental shortcuts used to make decisions. For example, people may use simple rules of thumb to help them deal with a complex decision—‘if I withdraw 4% each year from my pension pot, this will last me for retirement’. Although heuristics can save time and effort, especially when dealing with complex problems, they can sometimes be imperfect and open to exploitation by firms.

6 Financial Conduct Authority (2015), ‘Message received? The impact of annual summaries, text alerts and mobile apps on consumer banking behaviour’, Occasional Paper No. 10, March, pp. 4–5.

7 Bertrand, M. and Morse, A. (2011), ‘Information disclosure, cognitive biases, and payday borrowing’, The Journal of Finance, 66:6, pp. 1865–93.

8 Financial Conduct Authority (2015), ‘Retirement Income Market study: final report – confirmed findings and remedies’, March.

9 Data from the FCA for the period July–September 2015 shows that 64% of consumers stayed with their current provider. See Financial Conduct Authority (2015), ‘Retirement Income Market Data: July – September 2015’.

10 For practical reasons, a field trial was not possible in this case. The age range represented the section of the population who can potentially purchase an annuity.

11 This is known as the ‘experimenter demand effect’. See Zizzo, D.J. (2010), ‘Experimenter demand effects in economic experiments’, Experimental Economics, 13:1, pp. 75–98.
